Results <- ame(Coefficient = coefs, Robust_SE = robust_se, Adj_R2 = adj_r2)ī. # Print the coefficient estimates, robust standard errors, and adjusted R-squared Robust_se <- sqrt(diag(vcovHC(model, type = "HC1"))) # Calculate heteroskedasticity robust standard errors Model <- lm(TestScore ~ STR, data = data) To run the regression model in R Studio, you can use the following code: In this exercise, you will investigate the relationship between the class size and students' performance. A detailed description of the data set is given in caschool_description.pdf. Use the data file caschool.csv for this question. Text: CAN YOU PLEASE SEND THE CODE FOR 2b, R STUDIO ONLY PLS TestScore = Î☠ + Î☡*STR + Î☢*PetEL + Î☣*LchPct + ui Is the coefficient of lunch subsidy statistically significant? Find the relevant variable from the data set and run the regression model. In addition to the percentage of English learners, we now use the percentage eligible for subsidized lunch as a control variable. Does the direction of the omitted variable bias coincide with your intuition?ĭ. Compare the coefficient estimate of STR in (a) and (b). Report the coefficient estimates, (heteroskedasticity robust) standard errors, and adjusted R2.Ĭ. Add the variable as a control variable and run the regression: Find the variable for the percentage of English learners.

Run the following regression model and report the coefficient estimates, (heteroskedasticity robust) standard errors, and adjusted R2:ī. In this exercise, you will investigate the relationship between the class size and students' performance.Ī. A detailed description of the data set is given in caschooldescription.pdf. Based on the variance-covariance matrix of the unrestriced model we, again, calculate White standard errors.SOLVED: Text: CAN YOU PLEASE SEND THE CODE FOR 2b, R STUDIO ONLY PLS It can be used in a similar way as the anova function, i.e., it uses the output of the restricted and unrestricted model and the robust variance-covariance matrix as argument vcov.

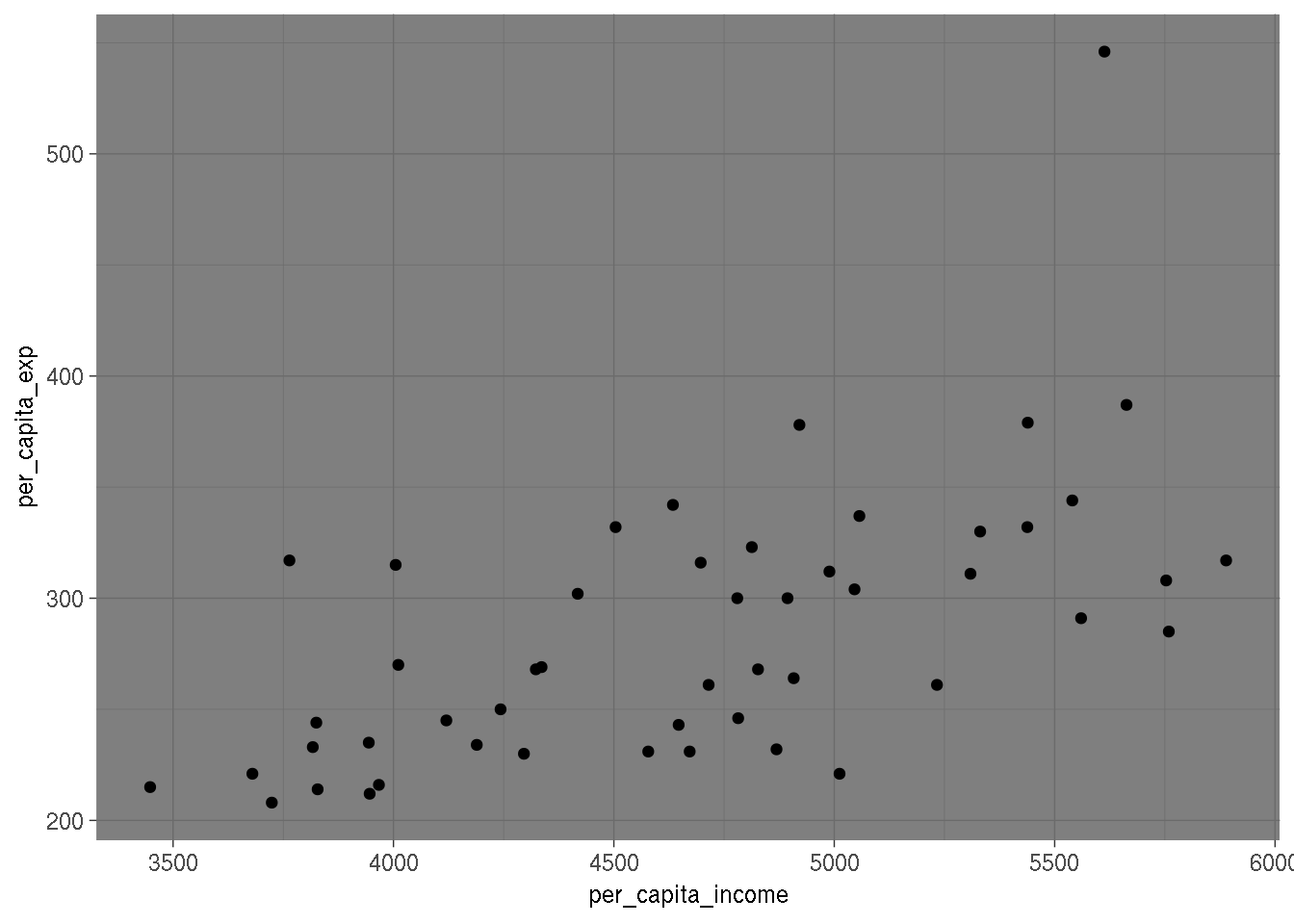

# 2 70 144286605 3 1560272 0.2523 0.8594įor a heteroskedasticity robust F test we perform a Wald test using the waldtest function, which is also contained in the lmtest package. The following example adds two new regressors on education and age to the above model and calculates the corresponding (non-robust) F test using the anova function. In the post on hypothesis testing the F test is presented as a method to test the joint significance of multiple regressors. # Load librariesĬoeftest(model, vcov = vcovHC(model, type = "HC0")) # Other, more sophisticated methods are described in the documentation of the function, ?vcovHC. In our case we obtain a simple White standard error, which is indicated by type = "HC0". The vcovHC function produces that matrix and allows to obtain several types of heteroskedasticity robust versions of it. The first argument of the coeftest function contains the output of the lm function and calculates the t test based on the variance-covariance matrix provided in the vcov argument. In R the function coeftest from the lmtest package can be used in combination with the function vcovHC from the sandwich package to do this. Since we already know that the model above suffers from heteroskedasticity, we want to obtain heteroskedasticity robust standard errors and their corresponding t values. Fortunately, the calculation of robust standard errors can help to mitigate this problem. Since standard model testing methods rely on the assumption that there is no correlation between the independent variables and the variance of the dependent variable, the usual standard errors are not very reliable in the presence of heteroskedasticity. Clustered standard errors are a common way to deal with this problem. This in turn leads to overly-narrow confidence intervals, overly-low p-values and possibly wrong conclusions. a misleadingly precise estimate of our coefficients. This is an example of heteroskedasticity. 
Simply ignoring this structure will likely lead to spuriously low standard errors, i.e. This means that there is higher uncertainty about the estimated relationship between the two variables at higher income levels. However, as income increases, the differences between the observations and the regression line become larger. The regression line in the graph shows a clear positive relationship between saving and income. Labs(x = "Annual income", y = "Annual savings") # Only use positive values of saving, which are smaller than income It takes a formula and data much in the same was as lm does, and all. The dataset is contained the wooldridge package. This function performs linear regression and provides a variety of standard errors. A popular illustration of heteroskedasticity is the relationship between saving and income, which is shown in the following graph.
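To make concrete what a call such as `vcovHC(model, type = "HC0")` computes, the following is a minimal base-R sketch of the White sandwich estimator, with the degrees-of-freedom scaling that distinguishes "HC1" as an option. The data are simulated purely for illustration and the helper name `white_vcov` is hypothetical; it is not part of the sandwich package, which should be preferred in practice.

```r
# Simulated data (an assumption for illustration): the error spread grows with x
set.seed(1)
n <- 200
x <- runif(n, 1, 10)
y <- 2 + 0.5 * x + rnorm(n, sd = 0.3 * x)

model <- lm(y ~ x)

# Hypothetical helper: White (HC0) variance-covariance matrix,
# optionally with the HC1 small-sample scaling n / (n - k)
white_vcov <- function(fit, small_sample = FALSE) {
  X <- model.matrix(fit)          # n x k design matrix
  e <- residuals(fit)             # OLS residuals
  bread <- solve(crossprod(X))    # (X'X)^-1
  meat <- crossprod(X * e)        # X' diag(e^2) X (row i of X scaled by e_i)
  V <- bread %*% meat %*% bread   # HC0 sandwich estimator
  if (small_sample) {
    V <- V * nrow(X) / (nrow(X) - ncol(X))  # HC1 scaling
  }
  V
}

se_classical <- sqrt(diag(vcov(model)))
se_hc0 <- sqrt(diag(white_vcov(model)))
se_hc1 <- sqrt(diag(white_vcov(model, small_sample = TRUE)))

# Side-by-side comparison of classical and robust standard errors
print(cbind(Estimate = coef(model), Classical = se_classical,
            HC0 = se_hc0, HC1 = se_hc1))
```

The coefficient estimates are the same in every column; only the standard errors change. HC1 differs from HC0 only by the factor n / (n - k), so the two converge as the sample grows.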
